In the previous tutorial on data warehousing, we learnt about staging area, DSO, ETL and data mart.

In this tutorial, we would study about normalization and data modelling required for building up the data warehouse.

Dimensional modelling is a good technique to deliver the data to the users of data warehouse. It helps in making the data warehouse simple and understandable. Data models that are started in a simple way usually end in simple way while those that are started in complex way end in complex way. The data models that are complicated run slowly and rejected by the business users.

Data Model: It is applicable to both existing system environment and data warehouse environment. Building a data warehouse data model starts with corporate data model. The corporate data model constitutes only primitive data. To this data model, performance factors are added while transportation of the model to an existing system environment. It is basically used as operational data and does not require changes. When this data model is applied to data warehouse, operational environment is removed.

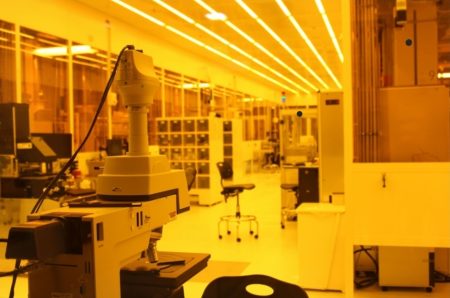

Time element is added to the key structures and derived data is also added in the corporate data model. Afterwards, stability analysis is done. In stability analysis, data attributes are grouped. From one large table, three tables are created on the basis of stability requirements. For example, data that does not change much is created in one table. Data which changes sometimes is created in another table while data that frequently require changes is grouped in the other table. An example of stability analysis is illustrated in the above diagram.

Levels of Data Modelling

There are three levels of data modelling viz. high-level data modelling, mid-level data modelling and low-level data modelling.

1) High-level Modelling: It is also called as Entity-Relationship level. Entity name is surrounded by oval shape. Relationships among the entities are shown using arrows while number of arrows represent cardinality.

Boundaries of the data model depict the scope of integration of the entities. Scope of integration determines part of the data that would be represented as entities and would reflect in the high-level model.

2) Mid-level Modelling: After the creation of high-level model, mid-level model is created. It is also referred to as DIS. Each entity created in the high-level model would lead to a mid-level model. All the mid-level models are generally not developed at once but can happens in rare cases. At first, there is an expansion of mid-level model for one entity while other entities remain static.

There are four constraints of mid-level data viz. primary group of data, secondary group of data, connector and type of data. The relationship between entities identified in high-level model is represented using a connector in the mid-level model.

In the mid-level model, common data is represented in the left while unique data is kept at the right side. For example, data and time are common among all transactions and hence are kept to the left side in the model.

3) Physical Data Model: The mid-level model is converted to physical data model after addition of key and characteristics. The data appears like a series of tables in the model which are also called the relational tables. Element of time is also added to the key structure. An input/output activity in association with computer is also incorporated to the model. This I/O system affects the performance of data warehouse.

Normalization

The data model results in a series of tables that contain keys and attributes. Merging the tables is required as it leads to minimal consumption of I/O. While fetching the query in tables which are not merged, performance and efficiency are affected. Other things are to index the tables and create arrays of data.

While retrieving the data, it would be required to search the data in a single row of array and hence a single I/O would be used. Creative index is also required while transferring the data from operational environment to data warehouse environment. Management of referential integrity is also important.

Continue reading the next tutorial for DW tool: SAP BI (Part-1).